Implementing Quantum-Resistant Encryption

Practical advice for choosing solutions, avoiding pitfalls, and future-proofing your systems

In earlier articles I looked at the quantum threat to current encryption, some of the myths and misconceptions that come up in discussion of the risks and solutions, and how to get started on addressing the threats. This article moves on to look in more detail at what you should do - in particular how to choose the appropriate solutions, to implement them effectively, and to future-proof your systems so that they are sustainable.

The key message of the earlier articles was that sufficiently capable quantum computers could eventually undermine security that relies on today’s common asymmetric cryptography, which is mainly used for protecting data in transit and for digital signatures that verify the authenticity of another party. The best solution is to implement Post-Quantum Cryptography (PQC). Despite the name, this involves no quantum technology. Instead, it uses new encryption algorithms that are designed to resist quantum attack while still being able to run on existing conventional computers.

Although a quantum computer that could present such a credible threat (often called a “cryptographically relevant quantum computer”) is several years away, organisations should not wait to start planning their response. There is a significant body of work required to identify where vulnerable cryptography is used, to decide what matters most, and then to deliver multiple complex ICT projects to make the required changes. Until you start assessing the risk and scoping the work, you cannot make a sensible judgment on where it fits in your organisational risk register and when each phase of work needs to start.

Note: this article goes into a lot of technical detail to help practitioners - if you’re not that interested in the details, feel free to skim down to the conclusions section!

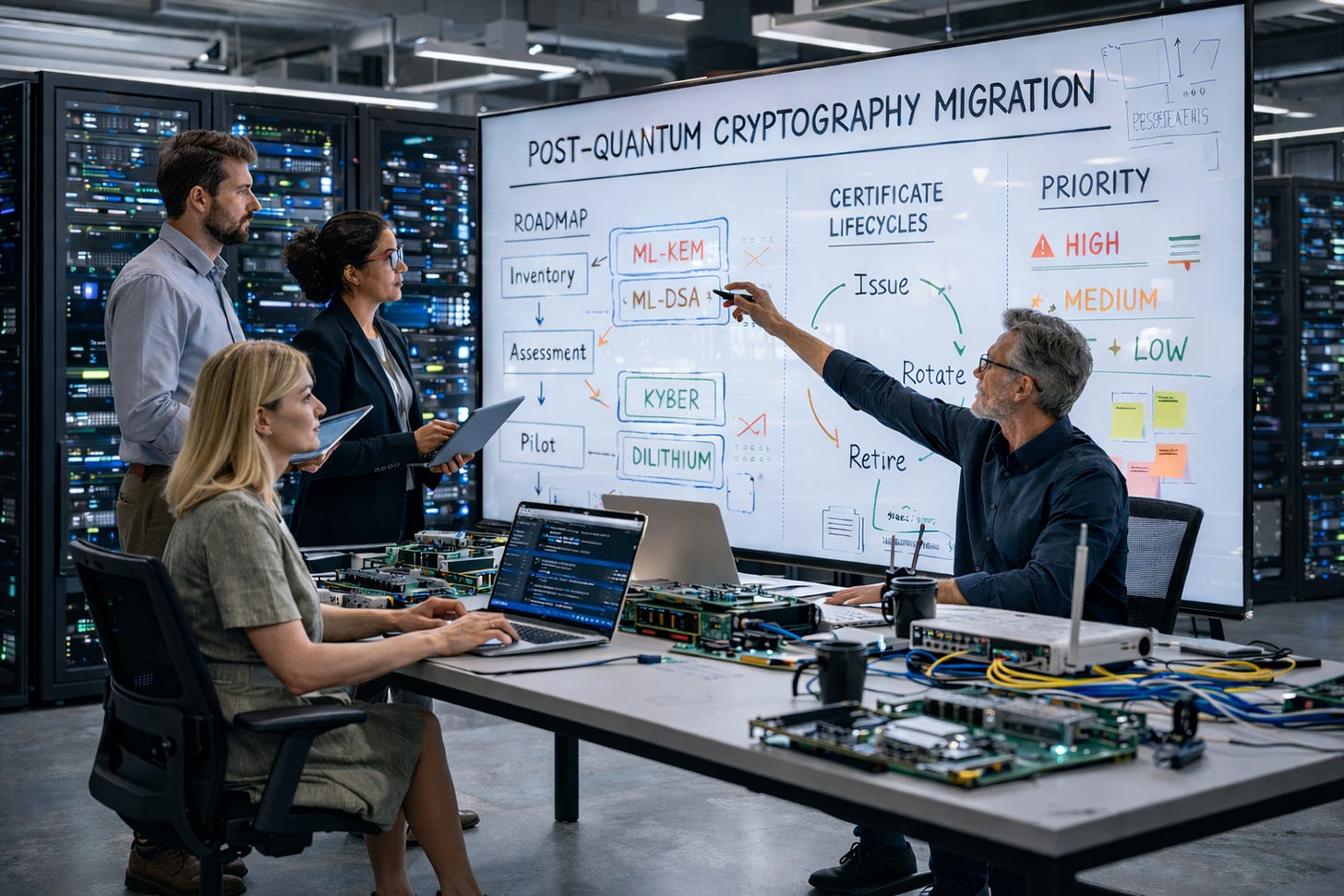

As outlined in the earlier getting started guide, the critical first step is to locate the data and systems that are potentially at risk because they use quantum-vulnerable encryption. This needs to be followed by assessing the risks of different systems. You then need to triage ruthlessly, both in terms of quantum risk and as part of your overall cyber security risk profile and improvement plans.
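To make the locate-then-triage step more concrete, here is a minimal Python sketch of a cryptographic inventory. It assumes you have already collected records of which systems use which algorithms. The system names, the 1-5 sensitivity scale and the priority score (sensitivity multiplied by how many years the data must stay secret) are all hypothetical, so substitute your own risk criteria. Symmetric algorithms such as AES are recorded but not flagged, because the main quantum threat is to asymmetric cryptography.

```python
from dataclasses import dataclass

# Public-key algorithm families that a sufficiently capable quantum computer
# running Shor's algorithm could break. Illustrative, not exhaustive.
QUANTUM_VULNERABLE = {"RSA", "DH", "DSA", "ECDH", "ECDSA", "EdDSA"}


@dataclass
class CryptoUse:
    system: str                       # hypothetical system name
    algorithm: str                    # algorithm family in use, e.g. "RSA"
    purpose: str                      # e.g. "TLS key exchange", "code signing"
    data_sensitivity: int             # hypothetical scale: 1 (low) to 5 (high)
    years_data_must_stay_secret: int

    @property
    def quantum_vulnerable(self) -> bool:
        return self.algorithm in QUANTUM_VULNERABLE


def triage(inventory: list[CryptoUse]) -> list[CryptoUse]:
    """Return only the quantum-vulnerable uses, highest priority first.

    The score (sensitivity x secrecy lifetime) is a hypothetical example.
    """
    at_risk = [use for use in inventory if use.quantum_vulnerable]
    return sorted(
        at_risk,
        key=lambda use: use.data_sensitivity * use.years_data_must_stay_secret,
        reverse=True,
    )


inventory = [
    CryptoUse("customer-portal", "ECDH", "TLS key exchange", 4, 10),
    CryptoUse("archive-backup", "AES", "data at rest", 5, 25),
    CryptoUse("hr-system", "RSA", "TLS key exchange", 5, 30),
    CryptoUse("build-server", "ECDSA", "code signing", 3, 5),
]

for use in triage(inventory):
    print(use.system, use.algorithm, use.purpose)
```

In practice the inventory will come from discovery tools, configuration reviews and vendor enquiries rather than a hand-written list. The useful idea is that triage becomes a repeatable query over recorded data, which you can re-run as your risk assessment changes.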

Planning is everything

The outcome of this initial assessment process should be a high-level plan of which systems to tackle and when. Each of these will be a project in its own right, and will need further planning to identify inputs, outputs, dependencies, resources and subtasks. I’ve seen estimates that, for a large enterprise, a full programme plan could have 100,000 or more tasks. While this may be plausible if you go deep enough into the detail, I’d suggest that if you’re developing a detailed plan with 100,000 tasks today, you’re probably putting your effort in the wrong place.

The value of your high-level plan is as a guideline for when to start planning each phase in more detail and when to begin executing them. Lots of things can change between now and then, including your own risk profile and the relative priority of different systems. The act of planning is vital, but remember that plans always change, especially on first contact with reality, or as Mike Tyson put it:

Everyone has a plan 'till they get punched in the mouth

So the important thing is to plan now to the level of detail you need for the decisions you’re making now. Otherwise, making a beautiful 100,000 task plan may turn into something you spend more time maintaining than using!

Choosing the right solution at the right time

Picking a cryptographic algorithm

When deciding how to upgrade your systems to remove the dependency on quantum vulnerable encryption, it is important to pick the most appropriate solution. As previously discussed, you should ignore the myths and focus on implementing post-quantum cryptography (PQC). PQC is not actually a quantum technology, but different maths that can generally run on the hardware you already have.

Although many algorithms have been proposed over the years, the only rational choice today is to use those standardised by the US National Institute of Standards and Technology (NIST), which have been through an open competition process and are becoming widely accepted and implemented. The two most relevant NIST standards are:

FIPS 203: the “ML-KEM” algorithm (based on something called “lattice cryptography”), for establishing encryption keys, as a replacement for current schemes such as RSA-2048 and elliptic curve cryptography that protect data in transit (e.g. secure connections from a web browser to a website)

FIPS 204: the “ML-DSA” algorithm, based on similar lattice cryptography concepts, for digital signatures, which also rely on public key cryptography and are used to verify the authenticity of another party and/or the content they provide

For Australian organisations in particular, the Australian Signals Directorate (ASD) recommends following these two standards. Each standard defines several parameter sets offering different security levels and key sizes, but organisations should generally aim to implement the strongest - ML-KEM-1024 and ML-DSA-87 - as their long-term solution.
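As a simple illustration of these options, the sketch below maps each FIPS 203 and FIPS 204 parameter set to its NIST security category and flags anything other than ML-KEM-1024 and ML-DSA-87 as interim. The “interim only” label reflects this article’s reading of ASD guidance. It is not an official policy engine, and `check_parameter_set` is a hypothetical helper for reviewing configured algorithm names.

```python
# NIST security categories of the parameter sets defined in
# FIPS 203 (ML-KEM) and FIPS 204 (ML-DSA); category 5 is the strongest.
PARAMETER_SETS = {
    "ML-KEM-512": 1,
    "ML-KEM-768": 3,
    "ML-KEM-1024": 5,
    "ML-DSA-44": 2,
    "ML-DSA-65": 3,
    "ML-DSA-87": 5,
}

# Long-term targets, following the ASD recommendation described in this article.
LONG_TERM_TARGETS = {"ML-KEM-1024", "ML-DSA-87"}


def check_parameter_set(name: str) -> str:
    """Classify a configured algorithm name (hypothetical review helper)."""
    if name not in PARAMETER_SETS:
        return f"{name}: not a FIPS 203/204 parameter set"
    category = PARAMETER_SETS[name]
    status = "long-term target" if name in LONG_TERM_TARGETS else "interim only"
    return f"{name}: category {category}, {status}"


for configured in ["ML-KEM-768", "ML-KEM-1024", "ML-DSA-87", "RSA-2048"]:
    print(check_parameter_set(configured))
```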

The “hybrid” option

Although the lattice cryptography standards have been developed and heavily scrutinised over many years, and are now formal NIST standards, some people are concerned that they might have unknown vulnerabilities, even against current conventional computers. After all, at quite a late stage of the NIST competition, one of the other candidates (SIKE) was found to be breakable in a few hours simply using a laptop computer.

In fact, there’s always been a theoretical possibility that someone could break our current RSA and elliptic curve cryptography using today’s computers without needing a quantum computer. However, these algorithms appear to have stood the test of time: no one has found such a weakness in them (or at least they’ve kept it very quiet if they have). Lattice cryptography has also been studied for a long time, but it has not been widely deployed, so there may be a greater chance of a vulnerability being found.

To allow for this risk, NIST have prepared other standards using different mathematical concepts for signatures, and there are plans for further standards using other approaches to key establishment (although it’s taking a while to find viable options). These can act as alternatives if problems are found with the lattice-based cryptography in FIPS 203 and FIPS 204 at a later date.

Nonetheless, FIPS 203 and FIPS 204 are the standards being widely implemented now, and we shouldn’t delay adopting them because of such theoretical risks. However, a potential mitigation is a “hybrid” or “double encryption” approach. Such a solution combines a traditional algorithm with a post-quantum one and can offer some reassurance while confidence in new implementations matures. But that comes at a cost: more complexity, more processing and bandwidth overhead, and potentially an extra migration step later to phase it out once you have more confidence in lattice cryptography. ASD’s current guidance does not recommend post-quantum/traditional hybrid schemes, although it does not prohibit them, leaving the decision to each organisation on a case-by-case basis.
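A common way to build a hybrid key exchange is to run both a traditional and a post-quantum key exchange, concatenate the two shared secrets, and pass the result through a key derivation function. An attacker then needs both secrets to recover the session key. The Python sketch below shows only that combining step, using HKDF (RFC 5869) built from the standard library. The two shared secrets are random stand-ins, not real ECDH or ML-KEM outputs, and the `hybrid-demo|` label is a hypothetical choice. Real protocols, such as hybrid TLS key exchange, define their own ordering and labels, so follow the relevant specification rather than this sketch.

```python
import hashlib
import hmac
import os

HASH_LEN = hashlib.sha256().digest_size  # 32 bytes


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int) -> bytes:
    """HKDF (RFC 5869): extract a pseudorandom key, then expand it."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested output is too long for HKDF-SHA-256")
    if not salt:
        salt = bytes(HASH_LEN)  # RFC 5869: an absent salt is HashLen zero bytes
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()  # extract step
    okm, block, counter = b"", b"", 1
    while len(okm) < length:  # expand step: T(i) = HMAC(PRK, T(i-1) | info | i)
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine_hybrid_secrets(classical_ss: bytes, pq_ss: bytes, context: bytes) -> bytes:
    """Derive one 32-byte session key from both shared secrets.

    Both secrets feed the KDF, so deriving the session key requires both;
    an attacker would have to break the classical AND the post-quantum exchange.
    """
    return hkdf_sha256(classical_ss + pq_ss, salt=b"", info=b"hybrid-demo|" + context, length=32)


# Random stand-ins only: no real ECDH or ML-KEM exchange happens here.
classical_ss = os.urandom(32)
pq_ss = os.urandom(32)
session_key = combine_hybrid_secrets(classical_ss, pq_ss, b"handshake-transcript")
print(session_key.hex())
```

This also shows where the extra cost comes from: two key exchanges must be performed and transmitted, and the combining logic is one more piece of code that has to be implemented, tested and eventually retired.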

Potential challenges

Although PQC is “just” new maths that runs on conventional hardware and doesn’t require quantum technology, it is not always a simple drop-in replacement. The algorithms are more complex, and because we have less experience with them, there is an increased risk of implementation mistakes that can create vulnerabilities of their own. Where possible, avoid rolling your own encryption software; use proven solutions or reputable, well-tested libraries instead. Once implemented, monitor for updates and patches as these implementations go through an initial shakedown period in wide deployment, and be ready to apply them as soon as possible.

Another challenge is that PQC algorithms can be more resource-intensive than existing ones. There had been some concerns that they would need more compute cycles, but interestingly, benchmarks on modern CPUs show that they run at similar speeds to existing algorithms, and some operations are actually faster. This may not be the case for specialised systems with more basic processors, such as small Internet of Things devices, so you may need to do some benchmarking of your own.
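Benchmarking doesn’t need to be elaborate to be useful. A minimal timing harness in Python might look like the following. It assumes you wrap each operation you care about in a zero-argument function, for example key generation, encapsulation, signing or verification from whichever reputable PQC library you choose. The SHA-256 call in the usage example is only a placeholder so the sketch runs without extra dependencies. Run the real measurements on the actual target hardware.

```python
import hashlib
import statistics
import time
from typing import Callable


def benchmark(operation: Callable[[], object], repeats: int = 5, iterations: int = 1000) -> dict:
    """Time `operation` and return the median and worst observed ops/second.

    Each of the `repeats` samples times `iterations` back-to-back calls, which
    smooths out timer resolution and scheduling noise.
    """
    rates = []
    for _ in range(repeats):
        start = time.perf_counter()
        for _ in range(iterations):
            operation()
        elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero reading
        rates.append(iterations / elapsed)
    return {"median_ops_per_s": statistics.median(rates), "min_ops_per_s": min(rates)}


# Placeholder workload: replace with, e.g., a real encapsulation or signing call.
payload = b"x" * 1024
results = benchmark(lambda: hashlib.sha256(payload).digest())
print(f"median: {results['median_ops_per_s']:,.0f} ops/s, worst: {results['min_ops_per_s']:,.0f} ops/s")
```

Reporting the worst sample alongside the median is a deliberate choice. On constrained devices the slow cases are often what break timeouts and user experience.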

ML-KEM-1024 keys are around 6 times larger than RSA-2048 keys, while ML-DSA-87 signatures are several tens of times larger than current elliptic curve signatures (roughly 70 times: about 4.6 KB compared with 64 bytes). Each one is still only a few kilobytes, but this can add up when storing large numbers of certificates, or become critical in smaller systems with limited memory and/or network bandwidth. That means practical engineering matters: you need to understand the resource implications for the systems you care about, particularly constrained or performance-sensitive ones. Even where the overhead is expected to be manageable, it still needs to be measured rather than assumed.
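The size comparisons can be sanity-checked with simple arithmetic. The sketch below uses the published sizes from FIPS 203 and FIPS 204 and the raw (unencoded) sizes for the classical algorithms, so real certificates and protocol messages will be somewhat larger once encoding overhead is included. The figure of one million certificates is a hypothetical example.

```python
# Sizes in bytes. ML-KEM / ML-DSA figures are from FIPS 203 and FIPS 204;
# classical figures are raw sizes without ASN.1/DER encoding overhead.
SIZES = {
    "RSA-2048 public key (modulus)": 256,
    "ECDSA P-256 public key (uncompressed)": 65,
    "ECDSA P-256 signature": 64,
    "ML-KEM-1024 encapsulation key": 1568,
    "ML-DSA-87 public key": 2592,
    "ML-DSA-87 signature": 4627,
}


def ratio(new: str, old: str) -> float:
    """How many times larger item `new` is than item `old`."""
    return SIZES[new] / SIZES[old]


def extra_storage_mb(count: int, growth_per_item_bytes: int) -> float:
    """Extra storage in megabytes (10**6 bytes) when `count` items each grow."""
    return count * growth_per_item_bytes / 1_000_000


kem = ratio("ML-KEM-1024 encapsulation key", "RSA-2048 public key (modulus)")
sig = ratio("ML-DSA-87 signature", "ECDSA P-256 signature")
print(f"ML-KEM-1024 key vs RSA-2048 key: {kem:.1f}x")  # 6.1x
print(f"ML-DSA-87 vs ECDSA P-256 signature: {sig:.0f}x")  # 72x

# Growth per certificate if both its public key and its signature move from
# ECDSA P-256 to ML-DSA-87: (2592 - 65) + (4627 - 64) = 7090 bytes.
growth = (SIZES["ML-DSA-87 public key"] - SIZES["ECDSA P-256 public key (uncompressed)"]) + (
    SIZES["ML-DSA-87 signature"] - SIZES["ECDSA P-256 signature"]
)
print(f"Extra storage for 1,000,000 certificates: {extra_storage_mb(1_000_000, growth):,.0f} MB")
```

Around 7 KB extra per certificate is trivial for a single web connection. It becomes noticeable across millions of stored certificates, or on a low-bandwidth link where every handshake carries a full certificate chain.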

Interestingly, ASD has suggested that weaker variants of ML-KEM and ML-DSA, which have smaller key and certificate sizes, can be used, but that systems should be upgraded to full strength by 2030. This has caused some confusion, but it appears to be intended to allow interim solutions to be implemented earlier than 2030 while allowing time for any hardware upgrades required to run the full-strength algorithms. However, given that 2030 is an arbitrary deadline anyway, and the aim of implementing PQC should be long-term security, it is probably better to focus on what may be needed to run the algorithms recommended for the long term, i.e. ML-KEM-1024 and ML-DSA-87. Interim weaker solutions can be considered as a last resort.

What’s the best approach to changing encryption?

Although many companies offer all sorts of potential solutions, the best solution is normally the simplest. The preference should always be to get an upgrade from the vendor or, for software you’ve developed in-house, to integrate reputable, well-tested libraries.

However, there will be systems where an upgrade simply is not possible. Perhaps the vendor is not providing patches, or perhaps you no longer have access to the source code. In those cases, the best long-term answer is usually replacement with a new supported system that either already uses quantum-resistant cryptography or has a credible upgrade path to get there.

However, if replacement is not practical, and you have also determined that the system cannot simply be retired, then the choices become harder. You may end up isolating the system from other networks and accepting some residual risk, or routing the traffic over a link with additional encryption. You might also look at products that can act as a proxy to add another layer of encryption, or as a gateway to perform certificate-related functions. But these options will usually introduce extra latency, extra cost, and another dependency to manage. Therefore they should only be used as a last resort, and ideally phased out over the longer term to avoid ongoing technical debt.

“Crypto-agility” - or being ready for next time

Another consideration is that, where you have the option, this is a good time to think about upgrading in a way that makes your system “crypto-agile”. This has become a bit of a buzzword, but it simply means making any future changes to your encryption methods as easy as possible. After all, you’ll probably have found this quite a complicated and taxing process, and there’s no guarantee that a future event won’t require you to go through it all over again.

In practical terms, that means avoiding designs in which algorithms, key sizes, certificate assumptions or protocol choices are hard-coded deep into applications, interfaces, procurement specifications, or operational processes. It also means documenting where cryptography is used, what it is doing, and what it depends on, so that future change is based on evidence rather than rediscovery.
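One common way to avoid hard-coding algorithms is to look them up by name from configuration through a single registry, and to store the algorithm name alongside each output so that older data can still be processed after the default changes. Python’s standard library has no post-quantum algorithms, so this sketch uses hash functions from `hashlib` purely as stand-ins to show the pattern. The policy format and the function names `get_digest` and `tag_message` are hypothetical.

```python
import hashlib
import json
from typing import Callable

# Single registry mapping configured algorithm names to implementations.
# Adding or retiring an algorithm means changing this table and the policy,
# not every call site in the application.
DIGESTS: dict[str, Callable[[bytes], bytes]] = {
    "SHA-256": lambda data: hashlib.sha256(data).digest(),
    "SHA-512": lambda data: hashlib.sha512(data).digest(),
    "SHA3-256": lambda data: hashlib.sha3_256(data).digest(),
}

# Hypothetical policy document; in practice this would come from configuration management.
POLICY_JSON = '{"integrity_digest": "SHA-256", "allowed": ["SHA-256", "SHA-512", "SHA3-256"]}'


def get_digest(policy: dict) -> Callable[[bytes], bytes]:
    """Look up the configured algorithm, refusing anything the policy does not allow."""
    name = policy["integrity_digest"]
    if name not in policy["allowed"] or name not in DIGESTS:
        raise ValueError(f"algorithm {name!r} is not permitted by policy")
    return DIGESTS[name]


def tag_message(message: bytes, policy: dict) -> dict:
    """Return the digest together with the name of the algorithm that produced it."""
    return {"alg": policy["integrity_digest"], "digest": get_digest(policy)(message).hex()}


policy = json.loads(POLICY_JSON)
print(tag_message(b"hello", policy))
policy["integrity_digest"] = "SHA3-256"  # a policy change, with no code change
print(tag_message(b"hello", policy))
```

The same shape applies to key establishment and signature algorithms. Swapping ML-KEM-768 for ML-KEM-1024, or retiring a hybrid mode later, then becomes a configuration change plus testing rather than a hunt through the codebase.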

Test, test, and test again

When implementing a solution, don’t forget to test thoroughly - not only the straightforward cases, but interoperability with other systems and other organisations - and all the awkward edge cases.

In practice, testing should cover much more than “does it work in the lab?” It should include interoperability across vendors and versions, performance under realistic load, certificate lifecycle processes, failure handling, monitoring, logging, rollback, and recovery.

This matters because cryptographic changes often fail not in the core algorithm, but at the boundaries: between products, between organisations, and between old assumptions and new implementations.
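One way to keep that boundary testing systematic is to generate the full test matrix rather than relying on memory. The sketch below enumerates every combination of client, server, key-exchange configuration and scenario. All the product names and scenarios are hypothetical placeholders for whatever exists in your own environment.

```python
from itertools import product

# Hypothetical test dimensions; replace with the products, versions,
# algorithm configurations and failure scenarios in your environment.
CLIENTS = ["browser-A v120", "mobile-app v3.2", "partner-gateway"]
SERVERS = ["load-balancer v9", "legacy-api v1.4"]
KEY_EXCHANGE = ["classical", "ML-KEM-1024", "hybrid"]
SCENARIOS = ["normal handshake", "expired certificate", "downgrade attempt refused"]


def build_matrix() -> list[dict]:
    """Every client x server x key-exchange x scenario combination."""
    return [
        {"client": c, "server": s, "kex": k, "scenario": sc}
        for c, s, k, sc in product(CLIENTS, SERVERS, KEY_EXCHANGE, SCENARIOS)
    ]


matrix = build_matrix()
print(f"{len(matrix)} test cases")  # 3 * 2 * 3 * 3 = 54
for case in matrix[:3]:
    print(case)
```

Even this small example produces 54 cases, and real environments grow multiplicatively. That is one more reason to prioritise the combinations that matter most and to automate wherever you can.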

Ongoing programme governance and communication

This is not just a technology refresh. It is a long-running change programme involving architecture, procurement, risk management, operations, vendors, integrators, and business owners. In reality, this means it won’t be a neat linear process: you will need to revisit and review the assessment and triage steps regularly.

It also means that communicating with and educating all stakeholders will be important. If people only encounter PQC when a change request lands on their desk, the programme will feel like an arbitrary burden. If they understand the rationale, the priorities, and the likely timescales, they are much more likely to make sensible decisions and support the work. This applies not only to your internal teams. You will also need to co-ordinate with external organisations to ensure interoperability is maintained.

In conclusion…

Locating your cryptography, assessing your risk and triaging which systems were a priority to upgrade was just the start. The act of planning is vital, but make sure your plan is agile and easily updated as we can guarantee things will change. As you move into execution of the plan, the details will need to be worked out, and there may be some unexpected surprises that make you revise your priorities.

The two most important principles to bear in mind are:

“Simple is better”: more complex, fancy-sounding solutions will take longer and carry a bigger risk of introducing unexpected vulnerabilities, so ask whether their potential benefits are tangible enough to justify the effort, or only theoretical.

Testing is critical: make sure you test that the system works as expected and how it copes with the unexpected. Involve internal and external parties in the testing process, and include benchmarking of non-functional requirements such as CPU load and network latency to avoid surprises once real-life usage ramps up.

The good news is that — whatever the scarier headlines would have you believe — you are not working against an imminent deadline. A quantum computer capable of breaking RSA-2048 is several years away. But there is several years’ worth of work to be done, the skills and experience needed are currently scarce, and starting early gives you options. Starting late does not.

As ever: plan proportionately, act methodically, and ignore the fear and hype. The quantum threat to encryption is real. It is also entirely manageable — if you start now.

MDR Quantum helps organisations to understand and assess their quantum risk and to respond accordingly. Our services include executive briefings, policy development, risk assessment and PQC migration strategy and planning - please reach out if you’d like to learn more about how we may be able to help.